The Luddite Fallacy Fallacy (or Why Mass Technological Unemployment is Inevitable)

We often hear that technology creates more jobs than it destroys, but as technology advances, that trend cannot hold.

Ned Ludd probably didn’t exist, but that didn’t stop him from becoming the namesake of a labour movement.

In the late 18th century, before the Luddites were the Luddites, they were skilled, well-regarded, and highly paid textile workers. They made their livelihood from the traditional craft of handloom weaving.

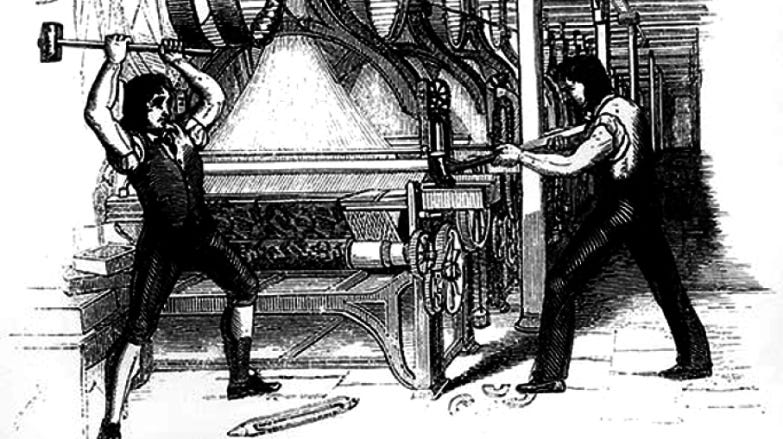

Then came the stocking frame. It was a machine that, with the aid of a somewhat less skilled operator, could do the same job.

The weavers were not happy: here was technology making them redundant. In a fit of rage (so legend has it), one such weaver named Ned Ludd smashed several stocking frames to bits. Other weavers also took to smashing these machines in revolt, leaving notes in their wake signed “Ned Ludd.” The Luddites were born.

The Luddite Fallacy

Today, the term Luddite is derogatory. It refers to those who seem to fear or resist technology.

We generally believe that the Luddites were wrong. The argument is twofold.

Technology might eliminate some jobs, but it always creates others. In other words, technology doesn’t reduce overall employment; it just restructures it. In the case of the Luddites, sure, they lost their jobs, but the loom operators now had theirs.

Technology makes us more productive, which is for the greater good. Higher productivity means we make more for less. This in turn means reduced prices, higher demand, alternative allocation of resources, and so on. In the case of the Luddites, yes, the weavers lost their incomes, but everyone else’s could go further. Cheaper clothes meant higher demand, which meant more looms, more loom builders, more loom operators, and so on. It also meant higher demand for other things and a positive knock-on effect on the economy as a whole.

Economists were sure enough of these arguments to coin the term, The Luddite Fallacy.

For the Luddites themselves the Luddite Fallacy would have been of very little comfort.

First, regardless of the macroeconomy, the Luddites had still lost their jobs. Growth in GDP matters precious little when one can’t feed, clothe, or house one’s children. Second, no matter how cheap clothes became, the Luddites had little money to buy them with, let alone life’s other necessities. Third, whereas some Luddites might be able to retrain to fill new roles, this wasn’t viable for everyone. Fourth, even if they were able to fill new roles, these, almost perforce, were lower paying than their previous roles as weavers.

Economists generally concede these points. Today, programs exist that try to soften the blow on those made technologically redundant. They’re mostly inadequate, but they exist.

The arguments behind the Luddite Fallacy rest on certain assumptions. First, each job lost is replaced by at least one new job. Second, the collective wages of the new jobs are at least equal to the wages of the old. Historically, these assumptions have held true.

The question is, will they continue to do so?

To answer this question we first have to look at two things:

The nature of work; and

The dynamics of automation

The following rehashes some writing elsewhere.

The Nature of Work

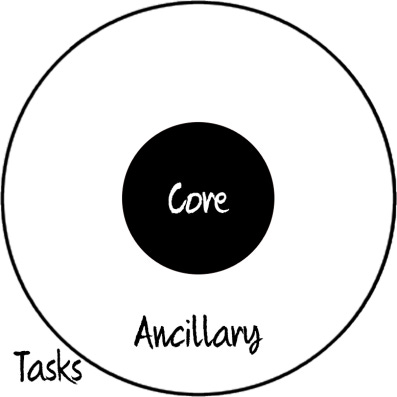

Work consists of Tasks. For instance, a taxi driver needs to find those looking for a ride, operate a vehicle safely, make small talk, and so on. Tasks are either Core or Ancillary. Core tasks are those necessary and sufficient for the work. Without these tasks there is no job. With them, you can get by. Ancillary tasks are important but unessential. For instance, a taxi driver’s core tasks include driving safely from A to B. If you can manage these tasks, you too could be a taxi driver. Ancillary tasks on the other hand might include helping with luggage, making small talk, recommending eateries, and so on.

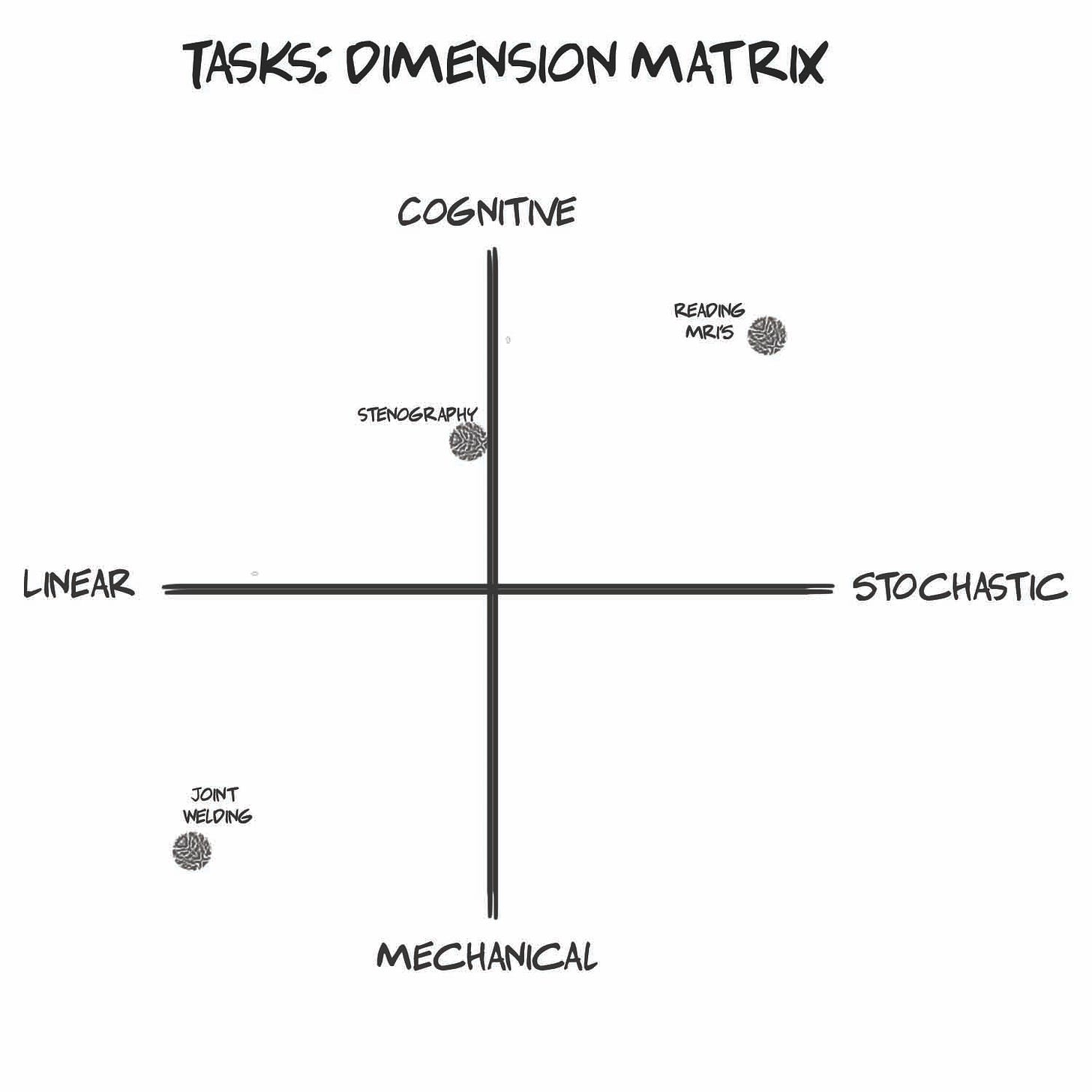

A task also has two dimensions: Cognitive and Mechanical. Put differently, our jobs involve thinking and doing. Some involve more of one than the other, but virtually all involve at least some of each. A poet might think more than do while a bricklayer might do more than think. A nurse might need a fair bit of both. This is an oversimplification but you get the point.

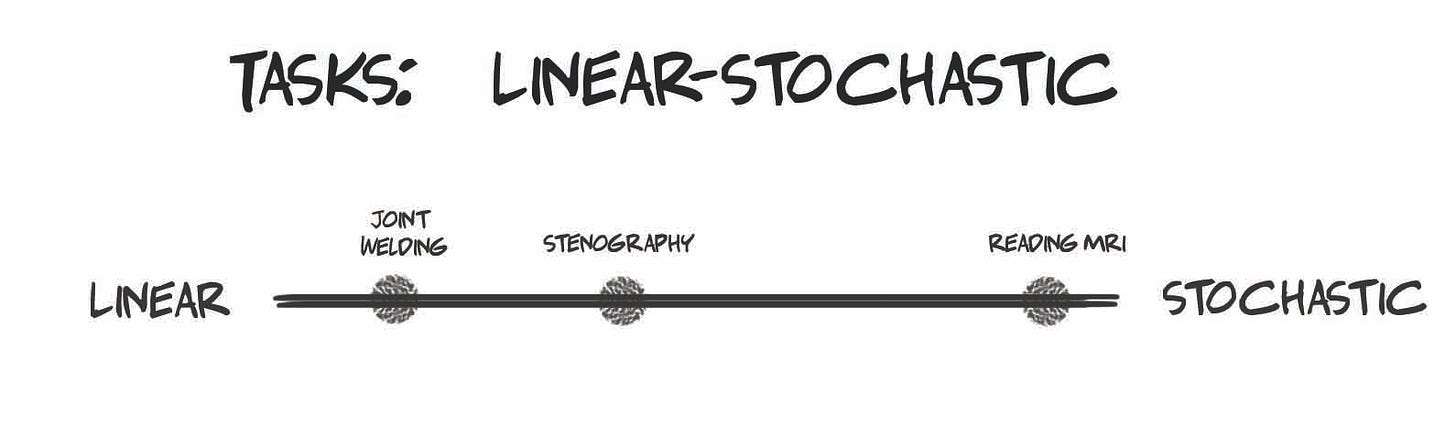

Each task also sits on a spectrum from Linear to Stochastic. Linear tasks are repetitive and predictable, while stochastic tasks are variable and unpredictable. For instance, the work of a food packer on an assembly line is mostly linear. Michael Jordan’s work, on the other hand, was stochastic.

Of course, the two dimensions can be overlaid into a matrix.
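As a rough illustration, the matrix can be sketched as a small data structure. This is a minimal sketch, not a definitive model: the names `Mode`, `Pattern`, `Task`, and `quadrant` are hypothetical, each dimension is flattened to a binary choice even though the text describes a mix and a spectrum, and the classification of the taxi driver's tasks is an assumption made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    COGNITIVE = "Cognitive"
    MECHANICAL = "Mechanical"


class Pattern(Enum):
    LINEAR = "Linear"
    STOCHASTIC = "Stochastic"


@dataclass(frozen=True)
class Task:
    name: str
    mode: Mode        # dominant dimension only; real tasks mix thinking and doing
    pattern: Pattern  # nearest end of the Linear-to-Stochastic spectrum
    core: bool        # True for Core tasks, False for Ancillary tasks


def quadrant(task: Task) -> str:
    """Return the matrix cell a task falls into, e.g. 'Mechanical-Linear'."""
    return f"{task.mode.value}-{task.pattern.value}"


# Hypothetical classification of the taxi driver's tasks (an assumption).
taxi_tasks = [
    Task("drive safely from A to B", Mode.MECHANICAL, Pattern.STOCHASTIC, core=True),
    Task("help with luggage", Mode.MECHANICAL, Pattern.LINEAR, core=False),
    Task("make small talk", Mode.COGNITIVE, Pattern.STOCHASTIC, core=False),
]

for task in taxi_tasks:
    kind = "core" if task.core else "ancillary"
    print(f"{task.name}: {quadrant(task)} ({kind})")
```

Treating each dimension as binary keeps the sketch short. A fuller model might score each task on a continuous scale for both dimensions.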

The Dynamics of Automation

In the past, technology replaced Linear tasks. In particular, it began with Mechanical-Linear tasks. Again, the stocking frame kicked out weavers. When computers came around, Cognitive-Linear tasks were next. The computer spreadsheet, for example, bumped off countless accounting clerks.

Today, technology is graduating from Linear to Stochastic tasks. Here things are moving in reverse. Cognitive-Stochastic tasks are first in line. Mechanical-Stochastic tasks are not far behind. Driverless cars are an early example. Put differently, we’re closer to building an AI Shakespeare than a robot Jordan.

Human capacities are essentially fixed, meaning that what a human is capable of is unlikely to change anytime soon. We can move, deduce, infer, see, hear, emote, and so on. A person can learn new skills but these are bound by our biology. We can expand our capacities with technology, but not without. Our inherent capacities are effectively stagnant.

On the other hand, machine capacities are dynamic. In fact, they’re dynamic only in one direction: growth. Barring a cataclysm we can expect machines to continue to pocket capacities that were once uniquely ours.

First computers beat us at chess, then Go, then Dota. Again, once won, barring some extinction-level event, these capacities cannot be lost.

These dynamics, our stagnation and machine evolution, imply that technology will catch up to us.

They also imply we’ll be surpassed.

Why will machines almost certainly eventually surpass us? First, the forward arrow of time is very long: we have a lot of time for this technology to emerge. Second, human intelligence is material. This means it’s not magic; it’s in our skulls. It is therefore subject to study, reverse engineering, emulation, and the like. Third, we’ve already made progress in this direction. Fourth, our rate of progress has been swift. It isn’t linear; each advance enables the next. Fifth, we don’t need to fully understand how the brain works to emulate it. We made light bulbs before understanding electrons.

In sum, probability favours the eventual emergence of superintelligent machines. Many in the field think it will happen within the century. Recent developments suggest it will happen far sooner.

But where job loss is concerned, superhuman is overkill.

“Almost” Counts

Brandy was wrong. Almost does count. At least where technological unemployment is concerned.

Remember that a job consists of core and ancillary tasks. Core tasks are essential to the role; ancillary tasks are not. A machine does not have to be capable of all tasks. If it is capable of almost all, specifically the core tasks, the role is at risk of automation. Only “at risk” because an economic case for automation must still be made.

We can depict the sets of machine capacities and role responsibilities in a Venn Diagram. On the left are all machine capacities and on the right, role responsibilities. The circle on the right remains stagnant. The circle on the left slowly creeps to the right. If and when core tasks enter the intersection, the role becomes automatable.

What about ancillary tasks? Those tasks that comprise the remainder of the role but fall outside the contemporary capacities of AI will have one of two outcomes:

Done without (Defrilling)

Done by less skilled labour (Deskilling)

Crucially, as AI capacities increase, the extent to which ancillary tasks will themselves be subject to automation will also increase.
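The Venn diagram argument above can be sketched with plain sets. This is a minimal sketch under stated assumptions: the function names `is_automatable` and `leftover_ancillary`, the task names, and the three snapshots of machine capacity are all hypothetical and chosen only to illustrate the logic. The economic case for automation is deliberately left out.

```python
def is_automatable(core_tasks: set[str], machine_capacities: set[str]) -> bool:
    """True once every core task falls inside the machine-capacity circle."""
    return core_tasks <= machine_capacities


def leftover_ancillary(ancillary_tasks: set[str], machine_capacities: set[str]) -> set[str]:
    """Ancillary tasks machines cannot yet do: candidates for defrilling or deskilling."""
    return ancillary_tasks - machine_capacities


# Hypothetical task names, loosely based on the taxi driver example.
core = {"find riders", "drive safely"}
ancillary = {"help with luggage", "make small talk"}

# The role's circle stays fixed while the machine circle grows over time.
machine_snapshots = [
    {"find riders"},
    {"find riders", "drive safely"},
    {"find riders", "drive safely", "make small talk"},
]

for capacities in machine_snapshots:
    print(is_automatable(core, capacities), sorted(leftover_ancillary(ancillary, capacities)))
```

In this toy run the role becomes automatable at the second snapshot, when the last core task enters the intersection. At the third snapshot the set of leftover ancillary tasks shrinks as well.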

Finally, technology has a well-established history of restructuring labour and spawning new roles (think UX designers, penetration testers, etc.). It is these new roles that have always comforted the Luddite Fallacy adherents. But we have shown that, increasingly, these new roles too will fall within the purview of machines.

The Luddite Fallacy Fallacy

With this, we see that the Luddite Fallacy, which in effect is the notion that mass technological unemployment can be dismissed, is itself a fallacy. We can summarize this Luddite Fallacy Fallacy as follows:

As machine capacities grow, more and more roles will be subject to automation.

It follows that among new jobs created by technology, more and more will be subject to automation.

It further follows that although value will be created, it will not be in the form of wage growth.

With a long enough time horizon, the Luddite Fallacy doesn’t hold. It’s a fallacy itself.

(The Problem with Hybrids)

As a quick aside, Elon Musk and others believe the answer is to merge with machines. It is a sort of “if you can’t beat them, join them” line of reasoning. By so doing, they argue, rather than be replaced by machines we, in some sense, become the machines.

Augmenting ourselves with technology is an entirely defensible idea. We do this routinely today and are likely to integrate with technology far more intimately in the future.

Quite apart from its questionable desirability, this line of reasoning has a fundamental flaw: our biology is a bottleneck. Our nerves can only conduct so quickly. Our craniums can only get so big. Our neurotransmitters can only be replenished so fast. Gene expression only happens at a certain rate. And so on and so on.

Ultimately, our biology sets a ceiling on how much function we can extract from technology through hybridization. Purely artificial systems would be free of the intrinsic constraints that limit purely biological or hybrid systems.

Merging with technology is therefore not a compelling long-term solution for dealing either with the Luddite Fallacy Fallacy in particular or with the risk of human supersedence more generally.

Summary

Although we often hear that technology creates more jobs than it destroys, this is unlikely to hold indefinitely. Machine capabilities are constantly expanding. Human capabilities, on the other hand, are stagnant on sub-evolutionary timescales. It is true that as certain roles are subsumed by automation other roles might be created. But there is no guarantee that those new roles will be filled by humans. In fact, as the capabilities of machines expand, the likelihood that new roles will themselves be subject to automation will increase. It follows that there will come a time when virtually all work could be done by machines. Technological unemployment isn’t a fallacy if and when the technology is sufficiently advanced. Well before that point, phenomena such as de-skilling will take place. Highly technical skills were a historic moat against competition by other human laborers or machines. Jobs that seem “too complex” can be deconstructed, and a machine need only be capable of core tasks to threaten a role with automation. What’s more, even complex, stochastic tasks are increasingly within machine reach. Human-machine integration is unlikely to work in the long run, as our biology acts as a bottleneck. New solutions, mostly social and political, are called for.